To create is human. For the past 300,000 years we’ve been unique in our ability to make art, cuisine, manifestos, societies: to envision and craft something new where there was nothing before.

Now we have company. While you’re reading this sentence, artificial intelligence (AI) programs are painting cosmic portraits, responding to emails, preparing tax returns, and recording metal songs. They’re writing pitch decks, debugging code, sketching architectural blueprints, and providing health advice.

Artificial intelligence has already had a pervasive impact on our lives. AIs are used to price medicine and houses, assemble cars, and determine what ads we see on social media. But generative AI, a category of system that can be prompted to create wholly novel content, is much newer.

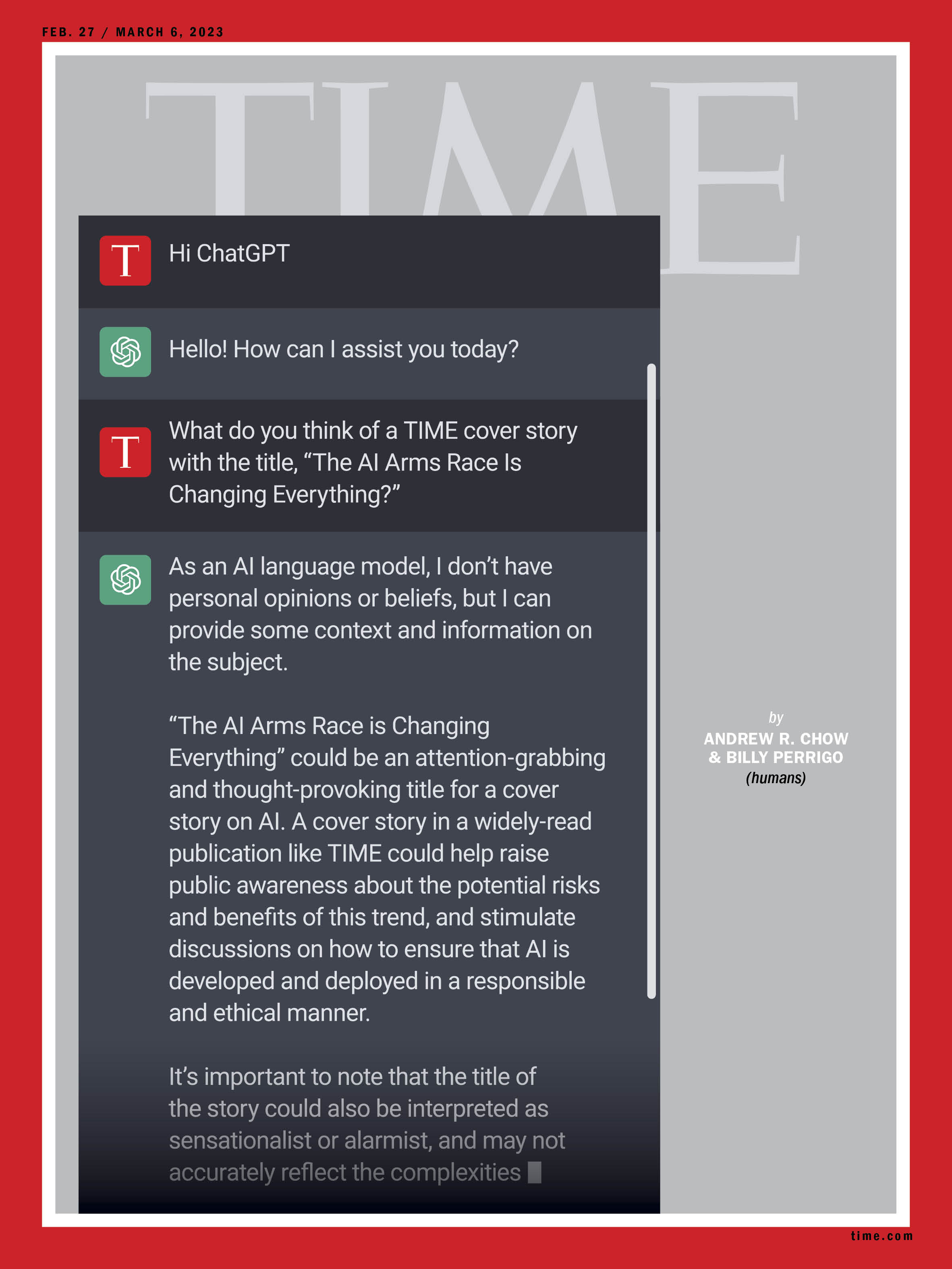

This shift marks the most important technological breakthrough since social media. Generative AI tools have been adopted ravenously in recent months by a curious, astounded public, thanks to programs like ChatGPT, which responds coherently (but not always accurately) to virtually any query, and Dall-E, which allows you to conjure any image you dream up. In January, ChatGPT reached 100 million monthly users, a faster rate of adoption than Instagram or TikTok. Hundreds of similarly astonishing generative AIs are clamoring for adoption, from Midjourney to Stable Diffusion to GitHub’s Copilot, which allows you to turn simple instructions into computer code.

Proponents believe this is just the beginning: that generative AI will reorient the way we work and engage with the world, unlock creativity and scientific discoveries, and allow humanity to achieve previously unimaginable feats. Forecasters at PwC predict that AI could boost the global economy by over $15 trillion by 2030.

This frenzy appeared to catch off guard even the tech companies that have invested billions of dollars in AI—and has spurred an intense arms race in Silicon Valley. In a matter of weeks, Microsoft and Alphabet-owned Google have shifted their entire corporate strategies in order to seize control of what they believe will become a new infrastructure layer of the economy. Microsoft is investing $10 billion in OpenAI, creator of ChatGPT and Dall-E, and announced plans to integrate generative AI into its Office software and search engine, Bing. Google declared a “code red” corporate emergency in response to the success of ChatGPT and rushed its own search-oriented chatbot, Bard, to market. “A race starts today,” Microsoft CEO Satya Nadella said Feb. 7, throwing down the gauntlet at Google’s door. “We’re going to move, and move fast.”

Wall Street has responded with similar fervor, with analysts upgrading the stocks of companies that mention AI in their plans and punishing those with shaky AI-product rollouts. While the technology is real, a financial bubble is expanding around it rapidly, with investors betting big that generative AI could be as market-shaking as Microsoft Windows 95 or the first iPhone.

But this frantic gold rush could also prove catastrophic. As companies hurry to improve the tech and profit from the boom, research about keeping these tools safe is taking a back seat. In a winner-takes-all battle for power, Big Tech and their venture-capitalist backers risk repeating past mistakes, including social media’s cardinal sin: prioritizing growth over safety. While there are many potentially utopian aspects of these new technologies, even tools designed for good can have unforeseen and devastating consequences. This is the story of how the gold rush began—and what history tells us about what could happen next.

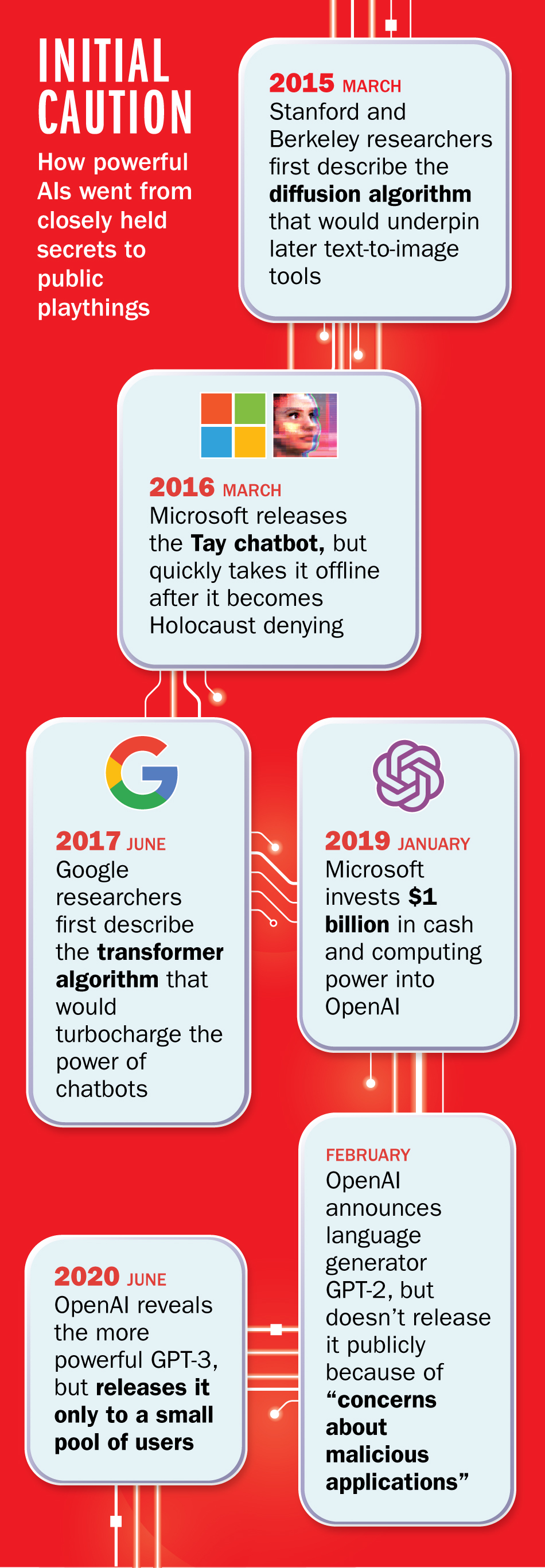

In fact, generative AI knows the problems of social media all too well. AI-research labs have kept versions of these tools behind closed doors for several years, while they studied their potential dangers, from misinformation and hate speech to the unwitting creation of snowballing geopolitical crises.

That conservatism stemmed in part from the unpredictability of the neural network, the computing paradigm that modern AI is based on, which is inspired by the human brain. Instead of the traditional approach to computer programming, which relies on precise sets of instructions yielding predictable results, neural networks effectively teach themselves to spot patterns in data. The more data and computing power these networks are fed, the more capable they tend to become.

In the early 2010s, Silicon Valley woke up to the idea that neural networks were a far more promising route to powerful AI than old-school programming. But the early AIs were painfully susceptible to parroting the biases in their training data: spitting out misinformation and hate speech. When Microsoft unveiled its chatbot Tay in 2016, it took less than 24 hours for it to tweet “Hitler was right I hate the jews” and that feminists should “all die and burn in hell.” OpenAI’s 2020 predecessor to ChatGPT exhibited similar levels of racism and misogyny.

The AI boom really began to take off around 2020, turbocharged by several crucial breakthroughs in neural-network design, the growing availability of data, and the willingness of tech companies to pay for gargantuan levels of computing power. But the weak spots remained, and the history of embarrassing AI stumbles made many companies, including Google, Meta, and OpenAI, mostly reluctant to publicly release their cutting-edge models. In April 2022, OpenAI announced Dall-E 2, a text-to-image AI model that could generate photorealistic imagery. But it initially restricted the release to a waitlist of “trusted” users, whose usage would, OpenAI said, help it to “understand and address the biases that DALL·E has inherited from its training data.”

Even though OpenAI had onboarded 1 million users to Dall-E by July, many researchers in the wider AI community had grown frustrated by OpenAI and other AI companies’ look-but-don’t-touch approach. In August 2022, a scrappy London-based startup named Stability AI went rogue and released a text-to-image tool, Stable Diffusion, to the masses. Releasing AI tools publicly would, according to a growing school of thought, allow developers to collect valuable data from users—and give society more time to prepare for the drastic changes advanced AI would bring.

Stable Diffusion quickly became the talk of the internet. Millions of users were enchanted by its ability to create art seemingly from scratch, and the tool’s outputs consistently achieved virality as users experimented with different prompts and concepts. “You had this generative Pandora’s box that opened,” says Nathan Benaich, an investor and co-author of the 2022 State of AI Report. “It shocked OpenAI and Google, because now the world was able to use tools that they had gated. It put everything on overdrive.”

OpenAI quickly followed suit by throwing open the doors to Dall-E 2. Then, in November, it released ChatGPT to the public, reportedly in order to beat out looming competition. OpenAI CEO Sam Altman emphasized in interviews that the more people used AI programs, the faster they would improve.

Users immediately flocked to both OpenAI and its competitors. AI-generated images flooded social media and one even won an art competition; visual effects artists for movies began using AI-assisted software for Hollywood hits like Everything Everywhere All at Once. Architects are devising AI blueprints; coders are writing AI-based scripts; publications are releasing AI quizzes and articles. Venture capitalists took notice, and have thrown over a billion dollars at AI companies that might unlock the next great productivity boost. Chinese tech giants Baidu and Alibaba announced chatbots of their own, boosting their share prices.

Microsoft, Google, and Meta, meanwhile, are taking the frenzy to extreme levels. While each has stressed the importance of AI for years, they all appeared surprised by the dizzying surge in attention and usage, and now seem to be prioritizing speed over safety. In February, Google announced plans to release its ChatGPT rival Bard and, according to the New York Times, said in a presentation that it will “recalibrate” the level of risk it is willing to take when releasing tools based on AI technology. And in Meta’s recent quarterly earnings call, CEO Mark Zuckerberg declared his aim for the company to “become a leader in generative AI.”

In this rush, mistakes and harms from the tech have risen—and so has the backlash. When Google demonstrated Bard, one of its responses contained a factual error about the Webb Space Telescope—and Alphabet’s stock cratered immediately after. Microsoft’s Bing is also prone to returning false results. Deepfakes—realistic yet false images or videos created with AI—are being used to harass people or spread misinformation: one widely shared video showed a shockingly convincing version of Joe Biden condemning transgender people.

Companies including Stability AI are facing lawsuits from artists and rights holders who object to their work being used to train AI models without permission. And a TIME investigation found that OpenAI used outsourced Kenyan workers who were paid less than $2 an hour to review toxic content, including sexual abuse, hate speech and violence.

As worrying as these current issues are, they pale in comparison with what could emerge next if this race continues to accelerate. Many of the choices being made by Big Tech companies today mirror those they made in previous eras, which had devastating ripple effects.

Social media, the Valley’s last truly world-changing innovation, carries the first valuable lesson. It was built on the promise that connecting people would make our societies healthier and individuals happier. More than a decade later, we can see that its failures came not from that welcome connectedness, but from the way tech companies monetized it: by slowly warping our news feeds to optimize for engagement, keeping us scrolling through viral content interspersed with targeted online advertising. True social connection has become increasingly sparse on our feeds. At the same time, our societies have been left to deal with the second-order implications: a gutted news business, a rise in misinformation, and a skyrocketing teen mental-health crisis.

It’s not hard to see AI’s integration into Big Tech products going down the same road. Alphabet and Microsoft are most interested in how AI will make their search engines more valuable, and have shown demos of Google and Bing in which the first results users see are AI-created. But Margaret Mitchell, chief ethics scientist at the AI-development platform Hugging Face, argues that search engines are the “absolute worst way” to use generative AI, because it gets things wrong so often. Mitchell says the actual strengths of AIs like ChatGPT—assisting with creativity, ideation, and menial tasks—are being sidelined in favor of shoehorning the technology into moneymaking machines for tech giants.

If search engines successfully integrate AI, that subtle shift could decimate the many businesses that rely on search, either for ad traffic or business referrals. Nadella, Microsoft’s CEO, has said the new AI-oriented Bing search engine will drive more traffic, and therefore revenue, to publishers and advertisers. But, as with the brewing pushback against AI-generated art, many in the media now fear a future where tech giants’ chatbots cannibalize content from news sites, providing nothing in return.

The question of how AI companies will monetize their projects also looms large. For now, most are free to use, because their creators are following the Silicon Valley playbook of charging little or nothing for products to crowd out competition, subsidized by huge investments from venture-capital firms. While unsuccessful companies adopting this strategy slowly bleed money, the winners often end up with vicelike grips on markets they can control as they see fit. Right now, ChatGPT is ad-less and free to use. It’s also burning a hole in OpenAI’s pocketbook: each individual chat costs the company “single-digit cents,” according to its CEO. The company’s ability to weather huge losses right now, thanks partly to Microsoft’s largesse, gives it a huge competitive advantage.

In February, OpenAI brought in a $20 monthly charge for a subscription tier of the chatbot. Google already prioritizes paid ads in search results. It’s not much of a leap to imagine it doing the same with AI-generated results. If humans come to rely on AIs for information, it will be increasingly difficult to tell what is factual, what is an ad, and what is completely made up.

As profit takes precedence over safety, some technologists and philosophers warn of existential risk. The explicit goal of many of these AI companies—including OpenAI—is to create an Artificial General Intelligence, or AGI, that can think and learn more efficiently than humans. If future AIs gain the ability to rapidly improve themselves without human guidance or intervention, they could potentially wipe out humanity. An oft-cited thought experiment is that of an AI that, following a command to maximize the number of paperclips it can produce, makes itself into a world-dominating superintelligence that harvests all the carbon at its disposal, including from all life on earth. In a 2022 survey of AI researchers, nearly half of the respondents said that there was a 10% or greater chance that AI could lead to such a catastrophe.

Inside the most cutting-edge AI labs, a few technicians are working to ensure that AIs, if they eventually surpass human intelligence, are “aligned” with human values. They are designing benevolent gods, not spiteful ones. But only around 80 to 120 researchers in the world are working full-time on AI alignment, according to an estimate shared with TIME by Conjecture, an AI-safety organization. Meanwhile, thousands of engineers are working on expanding capabilities as the AI arms race heats up.

“When it comes to very powerful technologies—and obviously AI is going to be one of the most powerful ever—we need to be careful,” Demis Hassabis, CEO of Google-owned AI lab DeepMind, told TIME late last year. “Not everybody is thinking about those things. It’s like experimentalists, many of whom don’t realize they’re holding dangerous material.”

Even if computer scientists succeed in making sure the AIs don’t wipe us out, their increasing centrality to the global economy could make the Big Tech companies that control them vastly more powerful. They could become not just the richest corporations in the world, charging whatever they want for commercial use of this critical infrastructure, but also geopolitical actors to rival nation-states.

The leaders of OpenAI and DeepMind have hinted that they’d like the wealth and power emanating from AI to be somehow redistributed. The Big Tech executives who control the purse strings, on the other hand, are primarily accountable to their shareholders.

Of course, many Silicon Valley technologies that promised to change the world haven’t. We’re not all living in the metaverse. Crypto bros who goaded nonadopters to “have fun staying poor” are nursing their losses or even languishing behind prison bars. The streets of cities around the world are littered with the detritus of failed e-scooter startups.

But while AI has been subject to a similar level of breathless hype, the difference is that the technology behind AI is already useful to consumers and getting better at a breakneck pace: AI’s computational power is doubling every six to 10 months, researchers say. It is exactly this immense power that makes the current moment so electrifying—and so dangerous.
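To put that doubling rate in annual terms, here is a rough back-of-the-envelope sketch. It assumes smooth exponential growth, which the researchers' estimate may not strictly imply, and the function name `yearly_growth_factor` is ours, invented for illustration.

```python
def yearly_growth_factor(doubling_months: float) -> float:
    """Multiple gained over 12 months by a quantity that doubles every `doubling_months`.

    Assumes steady exponential growth, a simplification for illustration.
    """
    return 2 ** (12 / doubling_months)


# The two ends of the "six to 10 months" range quoted above.
for months in (6, 10):
    factor = yearly_growth_factor(months)
    print(f"doubling every {months} months -> about {factor:.1f}x per year")
```

Under that assumption, a six-month doubling time works out to a fourfold increase each year, and a 10-month doubling time to roughly 2.3 times.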

—With reporting by Leslie Dickstein and Mariah Espada

Correction, March 8:

The original version of this story misstated how AI-assisted software was used on the film “Everything Everywhere All at Once.” It was used by the film’s visual effects artists, not its editors.

Write to Billy Perrigo at billy.perrigo@time.com