“Step up to the camera,” an airport gate agent commanded, motioning me to move closer to a kiosk set up to scan the faces of passengers boarding the aircraft. I hesitated. The flight was already heavily delayed, and I was due to attend the DVF Awards in Venice. I felt conflicted. Impatient travelers were queuing up behind me, adding social pressure to comply. Loss of opportunity was also part of my mental calculus. If I was viewed as a troublemaker, I might miss the flight altogether, along with my opportunity to meet a number of personal heroes. If I followed the command to scan my face, I would be counted among the passengers who consented to the use of facial recognition at an airport, even though I have actively campaigned against coercive uses of the technology. I had mere seconds to make a decision, and a significant amount of money and time already allocated to this trip: economic pressure.

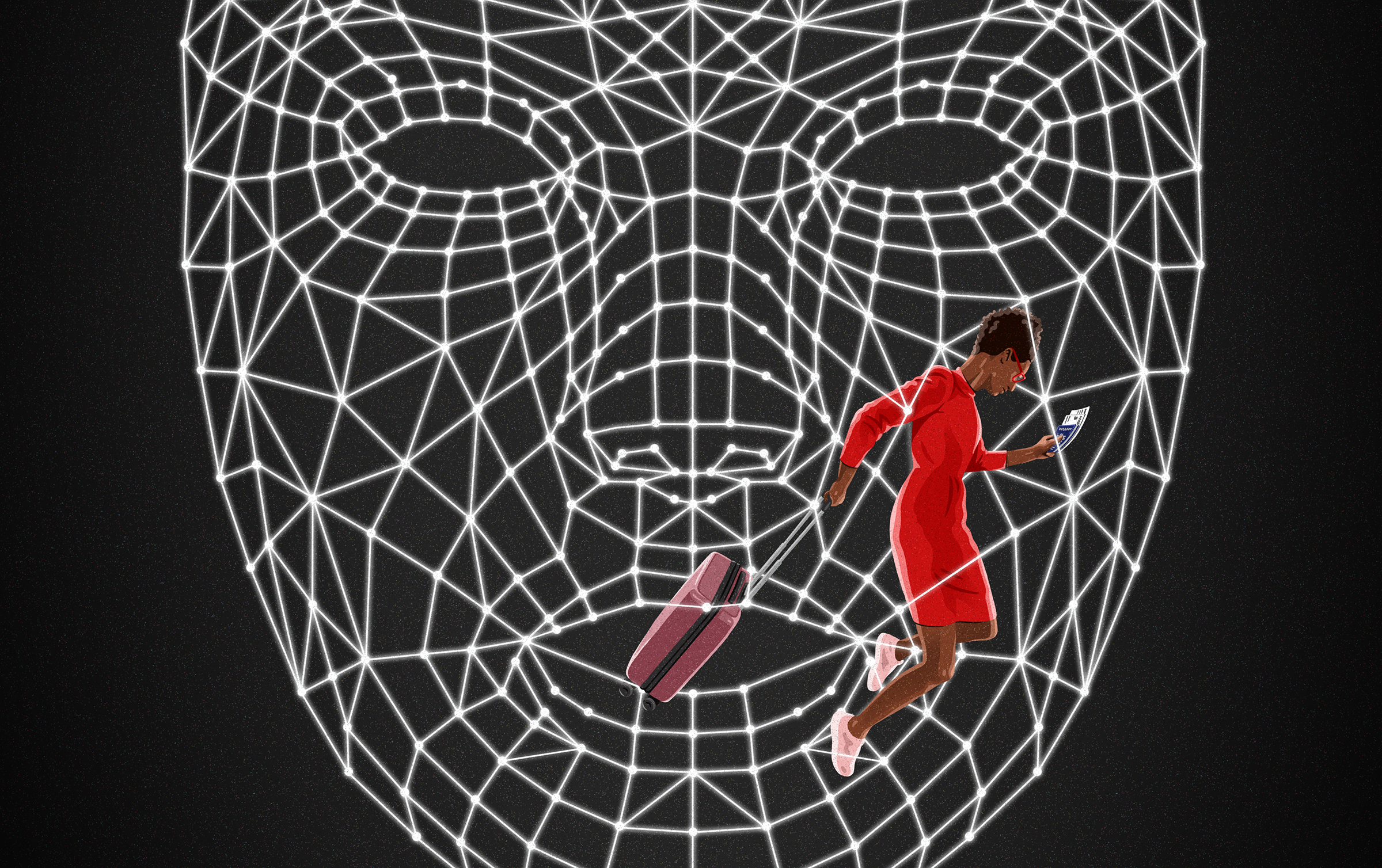

As an AI researcher, I have studied the privacy risks and dangerous law enforcement uses of AI-powered facial recognition while publishing widely read research on racial and gender bias in face-scanning AI systems from tech giants including Amazon, IBM, and Microsoft. I have written about data breaches and data overreaches from government contractors. As the founder of the Algorithmic Justice League (AJL), I have testified in front of Congress on the need for federal laws on invasive uses of facial recognition technologies. Beyond airports, this technology is infiltrating multiple aspects of everyday life, including having people scan their faces to access government services, take important examinations, and access emergency health services. Chillingly, false arrests linked to facial recognition misidentification continue. One of the most recent this year involved Porcha Woodruff, who was arrested while eight months pregnant for a carjacking she did not commit. She didn’t even fit the description given by a witness. Porcha reported having contractions while sitting in a holding cell and was rushed to the emergency room upon her release. Overreliance on AI imperiled two precious lives.

Adding even more pressure to the situation, earlier this summer at a Presidential AI Roundtable convened by the White House, face-to-face with President Biden, I had urged him to prioritize biometric rights and protections as AI systems infiltrate more aspects of our lives: pressure to walk the talk.

Would I prioritize speaking up for biometric rights or board the plane without disturbing the peace? Would I risk losing my chance to share my forthcoming book with Deputy Secretary-General of the United Nations Amina J. Mohammed, human rights lawyer Amal Clooney, and other luminaries gathered for the star-studded ceremony? Besides reading up on the guest list for the event, I had also read the policies of CBP, which govern international flights. On paper, I knew I had a right to opt out. But I also knew that those rights are seldom made widely known and that they do not extend to passengers who are not U.S. citizens.

While this experience focused on an international flight, airlines and the TSA use the number of people whose faces are scanned to make claims about the overall public acceptance of their domestic face scanning activities. Based on what I and many other travelers have witnessed, including Senator Merkley, who joined Senators Markey, Booker, Warren, and Sanders in sending a letter demanding that the TSA provide more information on its use of facial recognition technologies, there is ample reason to believe that consent numbers do not reflect the choices of well-informed travelers. Claims about the accuracy of these systems could also breach FTC deceptive practice guidelines if third parties show that the systems being used encode racial, gender, or age bias. At the time of writing, the TSA has not shared the demographic accuracy of the tools it is using or even the vendors it relies on. This lack of transparency does not inspire confidence. And regardless of accuracy, coerced consent to face scans must not be allowed to continue at airports.

Returning to the choice ahead of me, I looked around for signage to see if anything indicated to other travelers that they could opt out. Government policy claims signage with clear language is provided. The only sign I saw was unhelpfully placed on a wall partially blocked by the gate agents. I could barely see the full sign, let alone read everything on it, by the time I got to the face scanning camera. The signage mentioned biometric identity without explicitly saying facial recognition. How many travelers even know what that means? According to reports we have received at the Algorithmic Justice League (AJL) from 191 travelers who completed our airport survey this summer, 89% reported they did not see signage about facial recognition. Only 1.6% said they were told they could opt out. Coincidentally, Delta, in piloting facial recognition for boarding planes, said only 2 percent of passengers opted out.

Would I walk the talk? I asked in a loud voice, in hopes other passengers could hear me, “I would like to opt out of facial recognition. I am attempting to opt out?” The gate agent did not answer me. Instead, they asked to look at my passport. After a quick glance at the document and my ticket, they let me board the plane. Then they continued to tell passengers behind me to step up to the camera. No traveler should be made to feel like they have to give up their valuable biometric data. All gate agents should verbally tell passengers they have a right to opt out instead of relying solely on ill-placed signage.

The TSA has shared plans to expand its facial recognition pilot to 400 airports, and airlines like Delta and American Airlines are using facial recognition to board flights. Travelers must have a real choice, and both companies and government agencies should face penalties if they coerce travelers to give up face data. Travelers must also have a right to have all face data held by airlines purged. Facebook/Meta, under pressure from a class action lawsuit, deleted 1 billion faceprints, showing that data purges are possible. It’s not too late to make sure that the face is not the final frontier of privacy.

Joy Buolamwini, the founder of the Algorithmic Justice League, is the author of Unmasking AI: My Mission to Protect What Is Human in a World of Machines.
