I cringe at being called “Mother of the Cloud,” but having been part of the development and implementation of the internet and networking industry (as an entrepreneur, as CTO of Cisco, and as a board member of Disney and FedEx), I am fortunate to have had a 360-degree view of the technologies that are at the foundation of our modern world.

I have never had such mixed feelings about technological innovation. In stark contrast to the early days of internet development, when many stakeholders had a say, discussions about AI and our future are being shaped by leaders who seem to be striving for absolute ideological power. The result is “Authoritarian Intelligence.” The hubris and determination of tech leaders to control society is threatening our individual, societal, and business autonomy.

What is happening is not just a battle for market control. A small number of tech titans are busy designing our collective future, presenting their societal vision, and specific beliefs about our humanity, as the only possible path. Hiding behind an illusion of natural market forces, they are harnessing their wealth and influence to shape not just the productization and implementation of AI technology, but also the research.

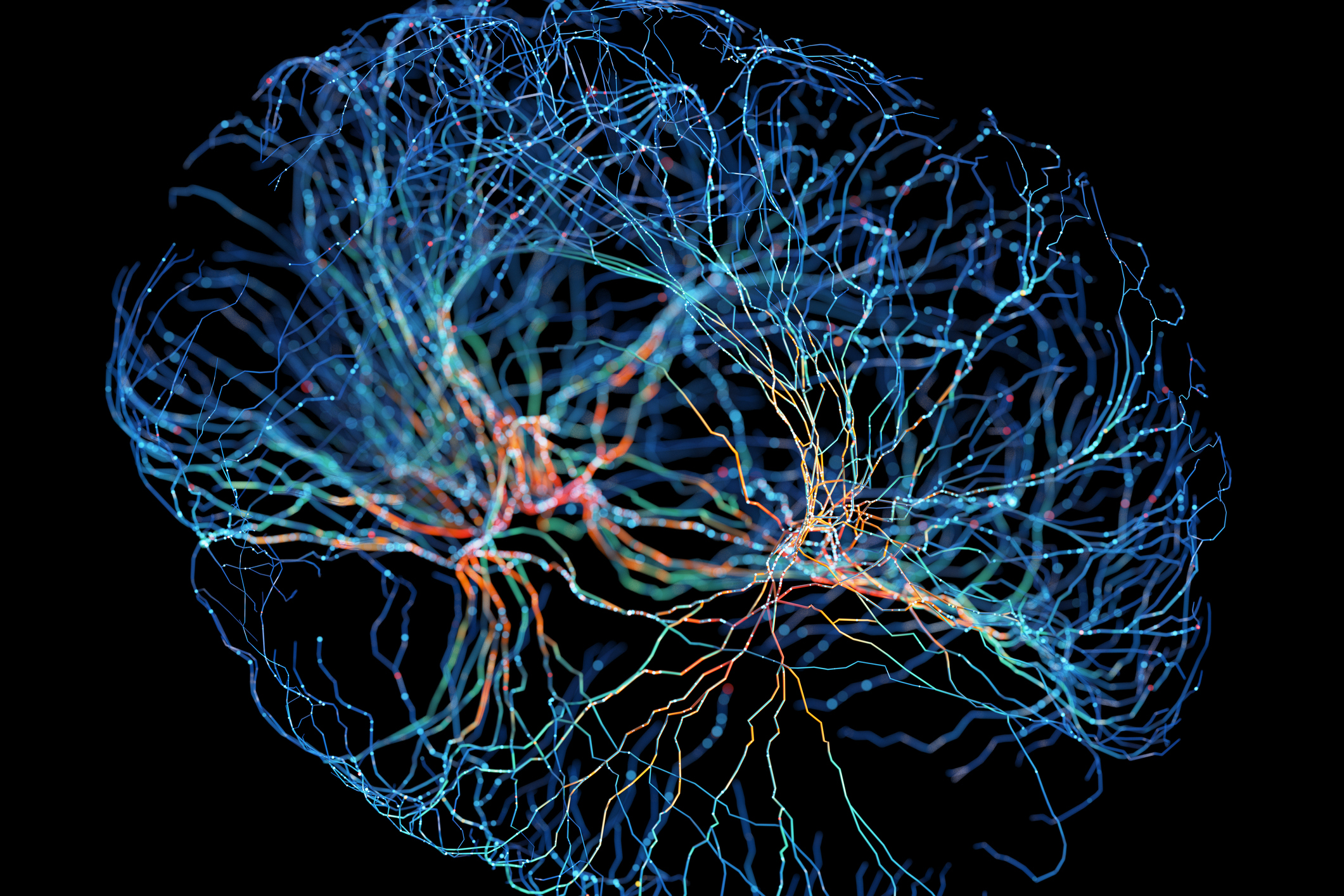

Artificial Intelligence is not just chatbots; it is a broad field of study. One implementation capturing today’s attention, machine learning, has expanded beyond predicting our behavior to generating content, an approach called Generative AI. The awe of machines wielding the power of language is seductive, but Performative AI might be a more appropriate name, as it leans toward production and mimicry (and sometimes fakery) over deep creativity, accuracy, or empathy.

The very fact that the evolution of technology feels so inevitable is evidence of an act of manipulation, an authoritarian use of narrative brilliantly described by historian Timothy Snyder. He calls out the politics of inevitability as “...a sense that the future is just more of the present, ... that there are no alternatives, and therefore nothing really to be done.” There is no discussion of underlying values. Facts that don’t fit the narrative are disregarded.

Here in Silicon Valley, this top-down authoritarian technique is amplified by a bottom-up culture of inevitability. An orchestrated frenzy begins when the next big thing to fuel the Valley’s economic and innovation ecosystem is heralded by companies, investors, media, and influencers.

They surround us with language co-opted from common values: democratization, creativity, open, safe. In behavioral psych classes, product designers are taught to eliminate friction, removing any resistance to our acting on impulse.

The promise of short-term efficiency, convenience, and productivity lures us. Any semblance of pushback is decried as ignorance, or a threat to global competition. No one wants to be called a Luddite. Tech leaders, seeking to look concerned about the public interest, call for limited, friendly regulation, and the process moves forward until the tech is fully enmeshed in our society.

We bought into this narrative before, when social media, smartphones and cloud computing came on the scene. We didn’t question whether the only way to build community, find like-minded people, or be heard, was through one enormous “town square,” rife with behavioral manipulation, pernicious algorithmic feeds, amplification of pre-existing bias, and the pursuit of likes and follows.

It’s now obvious that it was a path towards polarization, toxicity of conversation, and societal disruption. Big Tech was the main beneficiary as industries and institutions jumped on board, accelerating their own disruption, and civic leaders were focused on how to use these new tools to grow their brands and not on helping us understand the risks.

We are at the same juncture now with AI. Once again, a handful of competitive but ideologically aligned leaders are telling us that large-scale, general-purpose AI implementations are the only way forward. In doing so, they disregard the dangerous level of complexity and the undue level of control and financial return to be granted to them.

While they talk about safety and responsibility, large companies protect themselves at the expense of everyone else. With no checks on their power, they move from experimenting in the lab to experimenting on us, not questioning how much agency we want to give up or whether we believe a specific type of intelligence should be the only measure of human value.

The different types and levels of risks are overwhelming, and we need to focus on all of them: the long-term existential risks, and the existing ones. Disinformation, supercharged by deep fakes, data privacy issues, and biased decision making continue to erode trust—with few viable solutions. We do not yet fully understand risks to our society at large such as the level and pace of job loss, environmental impacts, and whether we want opaque systems making decisions for us.

Deeper risks call into question core aspects of our humanity. When we prioritize “intelligence” to the exclusion of cognition, might we devolve to become more like machines? On the current trajectory we may not even have the option to weigh in on who gets to decide what is in our best interest. Eliminating humanity is not the only way to wipe out our humanity.

Human well-being and dignity should be our North Star—with innovation in a supporting role. We can learn from the open systems environment of the 1970s and 80s. When we were first developing the infrastructure of the internet, power was distributed between large and small companies, vendors and customers, government and business. These checks and balances led to better decisions and less risk.

AI everything, everywhere, all at once is not inevitable if we use our powers to question the tools and the people shaping them. Private and public sector leaders can slow the frenzy through acts of friction: simply not giving in to the “Authoritarian Intelligence” emanating from Silicon Valley, or to our collective groupthink.

We can buy the time needed to develop impactful national and international policy that distributes power and protects human rights, and inspire independent funding and ethics guidelines for a vibrant research community that will fuel innovation.

With the right priorities and guardrails, AI can help advance science, cure diseases, build new industries, expand joy, and maintain human dignity and the differences that make us unique.
