Long ago, computers and machines began to replace blue-collar jobs, but as creatives we thought we were safe. Have we now reached the tipping point where computers can replace even the photo editor?

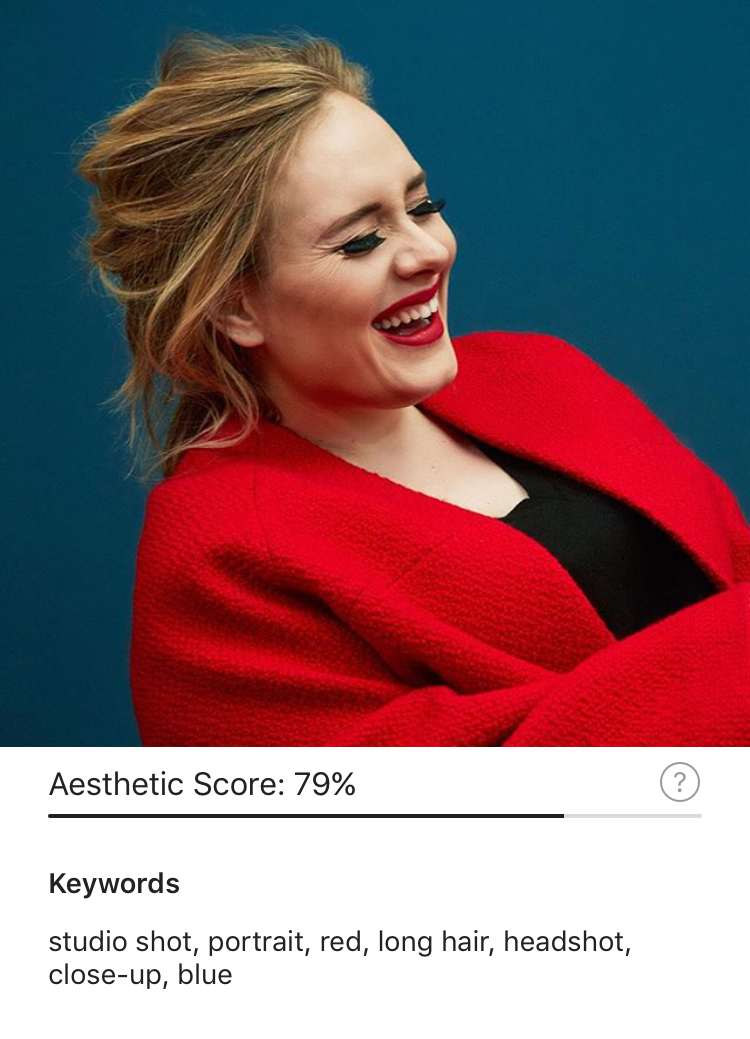

In recent months, EyeEm, an image-sharing platform, has been working on algorithms that, it says, will “augment” the work of photo editors. One algorithm analyzes pictures to determine what is in them, using deep learning to recognize thousands of concepts – from objects to colors and even emotions. The other algorithm references a database of millions of curated images to determine the quality of a photograph and give each one an “Aesthetic Score.” All of this is put together in EyeEm’s app, The Roll, which analyzes the camera rolls on users’ smartphones to identify, rank, and sort each image.

We decided to put this algorithm to the test, pitting it against TIME’s photo editors. To do that, we took the 20 most liked images on TIME’s Instagram feed and had our photo editors rate each one. We also ran the photos through EyeEm’s computer algorithm for comparison.

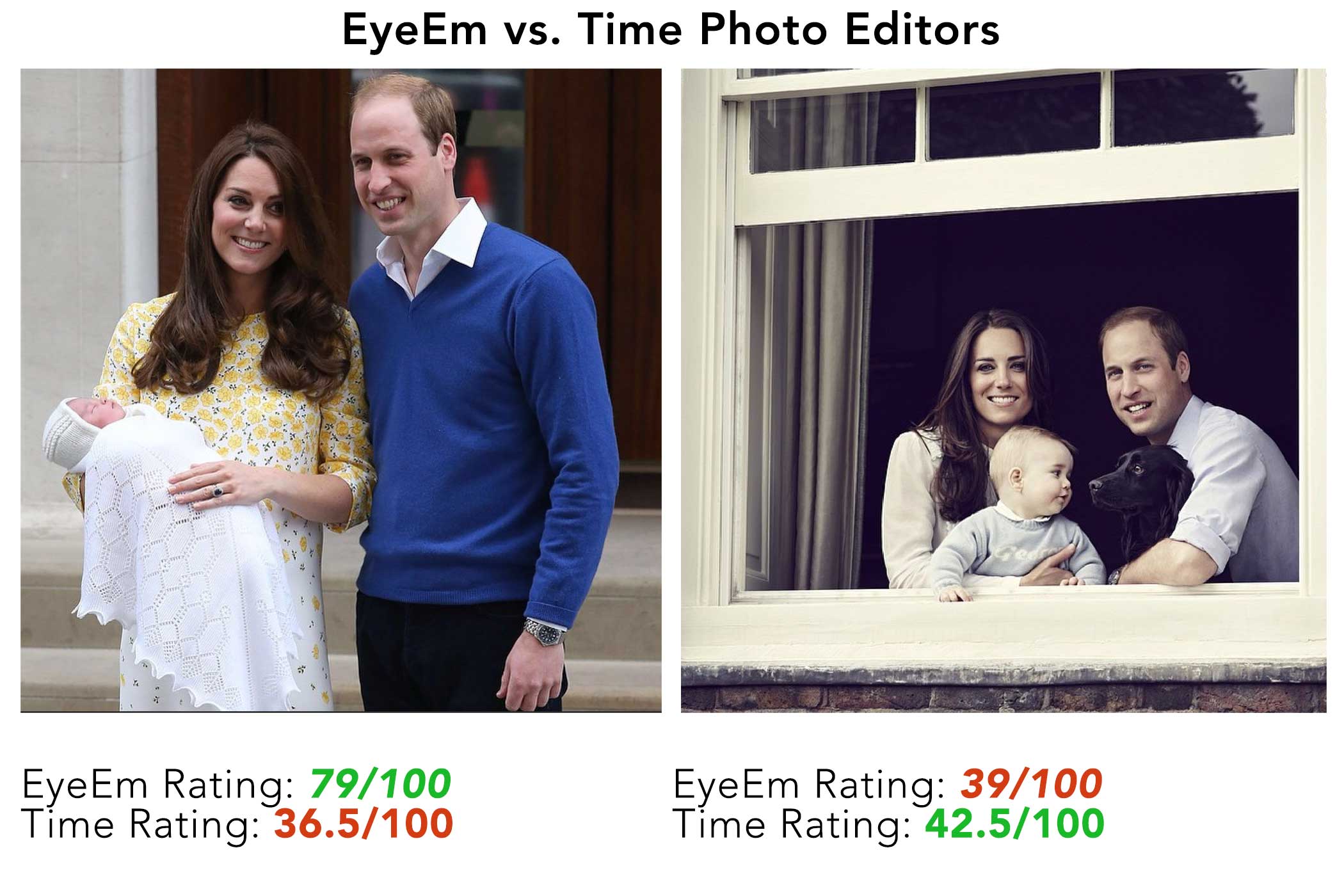

Judging by the selected images, TIME’s 3 million Instagram followers have a clear preference for celebrity, natural disasters and, of course, cute cats. TIME’s photo editors, while displaying an affinity for portraiture, gave celebrity far less weight. Finally, the EyeEm algorithm was able to identify people in images, but wasn’t able to identify who they were, effectively discounting the celebrity factor. Instead, it tended to favor bright colors, like fiery reds and icy blues, over portraits.

For example, the photo below of Kate Middleton, Prince William and their baby was deemed passable by both EyeEm’s algorithm and TIME’s photo editors, with an average rating of 40/100. Yet another image of the couple received a 79/100 rating from EyeEm, while the photo editors rated it a low 36.5/100. The difference is clear: while the first image might be better composed, the other displays brighter colors, which EyeEm factored in.

So, can EyeEm’s algorithms replace photo editors? Co-founder and CTO Ramzi Rizk notes that the algorithms are growing smarter every day, “so at some point photo editors have to sleep and the algorithm doesn’t.” However, he points out, “we’re not trying to replace photo editors. We’re trying to augment their work. We’re trying to help them go through this mountain of content and just limit it to the content that fits their criteria. What we’re trying to do is we’re trying to map trends. So as the trends evolve, we need to evolve with them.”

For now the computer is objectively good, but it is important to point out that subjectivity is what makes each photo editor distinctive. This is even more important when faced with a gallery or series of images where the editor is balancing complex sequencing decisions based on visual similarities or dissimilarities.

Images also have a feeling that is nearly impossible to quantify. It’s something that goes beyond color, contrast, composition, or subject matter. It’s the thing that separates a good photograph from a great one, where location, timing, skill, and luck all work in the photographer’s favor.

EyeEm might eventually excel at choosing the strongest social images, or avoiding sensitive images that are not suited for publication. It might even learn based on an editor’s preferences. But can subjectivity really be taught and replicated?

Josh Raab is an Associate Photo Editor at TIME. Follow him on Instagram and Twitter.
