New research announced by IBM Tuesday could pave the way for more powerful, energy efficient computers that use processors based on carbon rather than today’s silicon chips.

That shift matters because making faster computer processors generally means packing more transistors onto a chip. That, in turn, means making transistors as small as possible, so that more of them fit into a given area. But chipmakers appear to be approaching the physical limits of how small they can make silicon transistors without sacrificing performance. That has led some observers to argue that we are nearing the end of Moore's Law, the decades-old observation that the number of transistors on a chip roughly doubles every two years. Moore's Law is an important axiom among hardware companies, which rely on its predictability to plan future products.
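As a rough illustration of the arithmetic behind Moore's Law, the short Python sketch below projects transistor counts under an idealized doubling every two years, N(t) = N0 × 2^(t/2). It also shows why that pace forces transistors to shrink: if chip area stays fixed, each transistor's area must halve every doubling period, so its linear dimensions shrink by a factor of about 0.71 (1/√2). The function names and the one-billion starting count are hypothetical and chosen only for illustration; real chips do not track this idealized curve exactly.

```python
def projected_transistors(initial_count: float, years: float, doubling_period: float = 2.0) -> float:
    """Idealized Moore's Law: count doubles once every `doubling_period` years."""
    if doubling_period <= 0:
        raise ValueError("doubling_period must be positive")
    return initial_count * 2 ** (years / doubling_period)


def linear_scale_factor(years: float, doubling_period: float = 2.0) -> float:
    """Factor by which a transistor's width must shrink to fit the projected
    count into the same chip area (area halves per period, so width scales by 1/sqrt(2))."""
    if doubling_period <= 0:
        raise ValueError("doubling_period must be positive")
    return 2 ** (-years / (2 * doubling_period))


if __name__ == "__main__":
    start = 1_000_000_000  # hypothetical starting count: one billion transistors
    for years in (2, 4, 10):
        count = round(projected_transistors(start, years))
        scale = linear_scale_factor(years)
        print(f"after {years} years: {count:,} transistors, width x{scale:.3f}")
```

Running it shows the count doubling every two years while each transistor's width must fall to roughly 71 percent of its previous size per period, which is why the limits on shrinking silicon transistors translate directly into pressure on Moore's Law.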

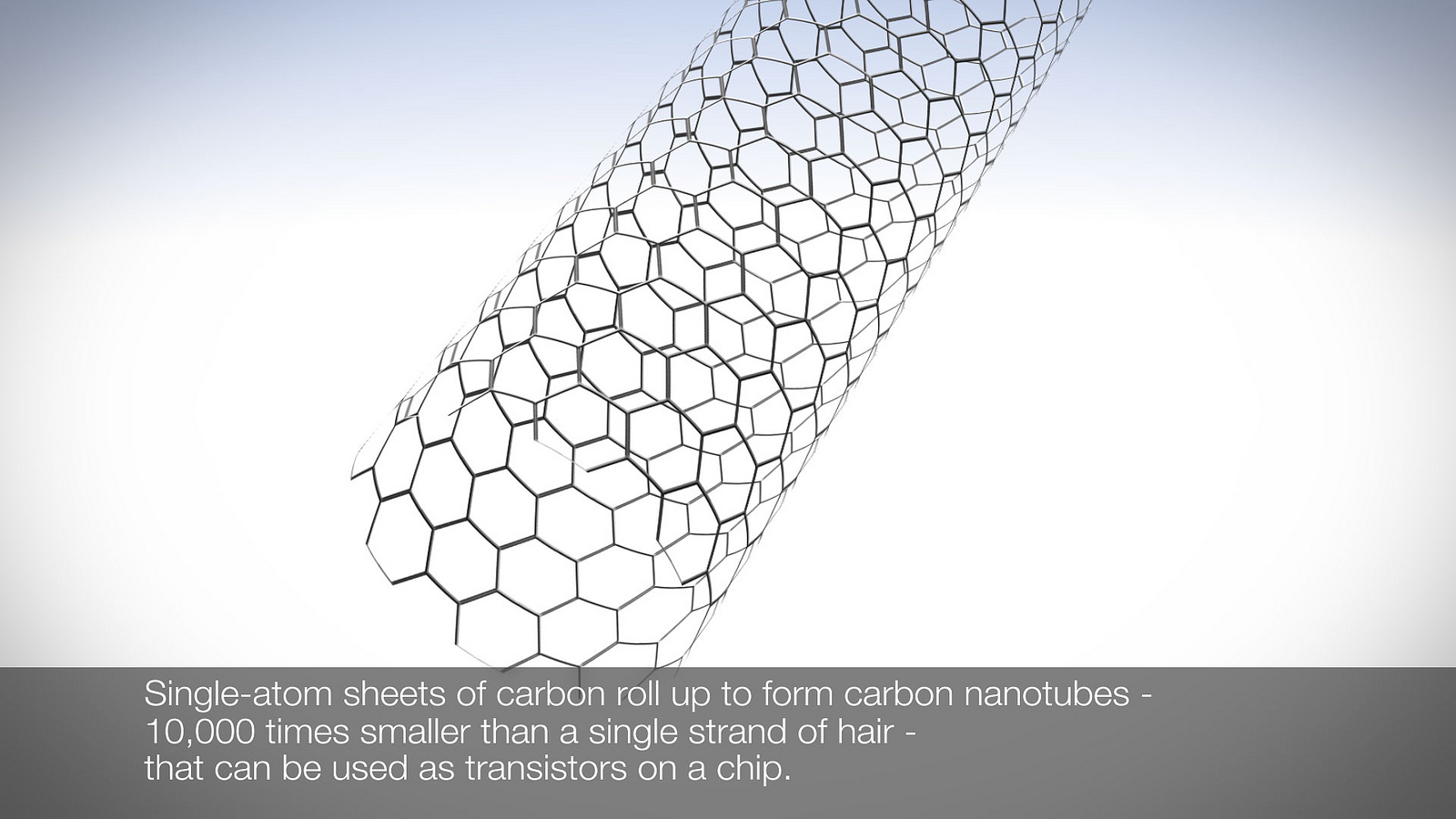

IBM's new research, to be published Friday in the journal Science, suggests that chipmakers can beat the physical limitations of silicon by switching to "carbon nanotubes," essentially one-atom-thick sheets of carbon rolled into a cigarette-like tube.

Chipmakers have long considered carbon promising because electrons can travel through it about ten times faster than they can through silicon, which ultimately translates into better computing performance. But carbon has shared a big problem with silicon. Transistors, which are essentially electrical switches, require contacts that let electricity flow into and out of them. For both silicon and carbon, making transistors smaller has meant increasing the electrical resistance in those contacts, which degrades performance.

IBM's researchers now say they've overcome that hurdle by rethinking how contacts are made, switching to a method the company says is akin to "microscopic welding." In that process, metal atoms are chemically bonded to carbon atoms at the ends of the nanotubes, allowing the contacts, and therefore the transistors, to shrink without sacrificing performance.

“This is really a 10 in importance,” says Wilfried Haensch, Senior Manager of Physics & Materials for Logic and Communications for IBM Research and one of the report’s co-authors.

Haensch explains the innovation through a parking garage metaphor: Imagine you drive into a garage and wind up parking too close to the cars on either side of you. “To get out of the door, you have to do contortions and it’s very difficult, and you would wish there would be more space.” One possible solution, Haensch says, would be to make parking spots wider—but that would mean the garage couldn’t hold as many cars. “But you can attack the problem from a different side, too. Why don’t we redesign the cars to use sliding doors? So this means there’s no requirement to have a large distance between adjacent cars. As a result, you can pack more cars in the parking garage.”

In Haensch's metaphor, the cars represent the transistors, while their doors are the contacts. "We changed the architecture of the contacts, which means we went from hinged doors to sliding doors," he says. "And this allows us to be independent from the contact resistance, or the difficulty of the electrons to get into the device, independent of the contact size. So this is really the breakthrough here."

All this could further delay the end of Moore's Law, especially in combination with other recent IBM research into ever-smaller silicon transistors. "This means that we've found another turn of the crank in Moore's Law," claims Haensch.

The innovation could also help IBM's business. The storied computing company gave up on its PC business ten years ago, selling it to China's Lenovo for $1.25 billion. Since then, it has focused on cloud computing, big data and the Internet of Things. Smaller, faster processors based on carbon nanotube technology could give Big Blue a big advantage in all three fields. But it will take some time before IBM's post-silicon future arrives. "I would think it's probably 2020 and beyond," says Haensch. "Not earlier."
