A few months ago, I was set to begin a summer internship at Facebook. As a rising senior at Harvard University — the same school where Facebook founder and CEO Mark Zuckerberg sat in his dorm room and wrote the social media giant’s code — I was thrilled at the prospect of joining the “hacker culture” of Facebook where “code wins arguments.”

That forward-thinking “hacker culture” is how Facebook started. Can you imagine if Zuckerberg had gone to the school administration to ask permission to build Facebook in his dorm room? Luckily, he didn’t need permission. He built it, and his code won.

Before receiving this internship, I had taken a class called Privacy and Technology, taught by Professors James Waldo and Latanya Sweeney. In the course, I learned to question technology and its role in people’s lives. My experiences in this class, along with my interest in Facebook’s products, led me to code and release the Marauder’s Map extension, a plugin that allowed users of the Facebook Messenger app to see the geolocations of the people they were messaging. I had discovered that the Messenger app on Android automatically shared the geolocations of those using it with every message sent unless the user explicitly turned geolocation off.

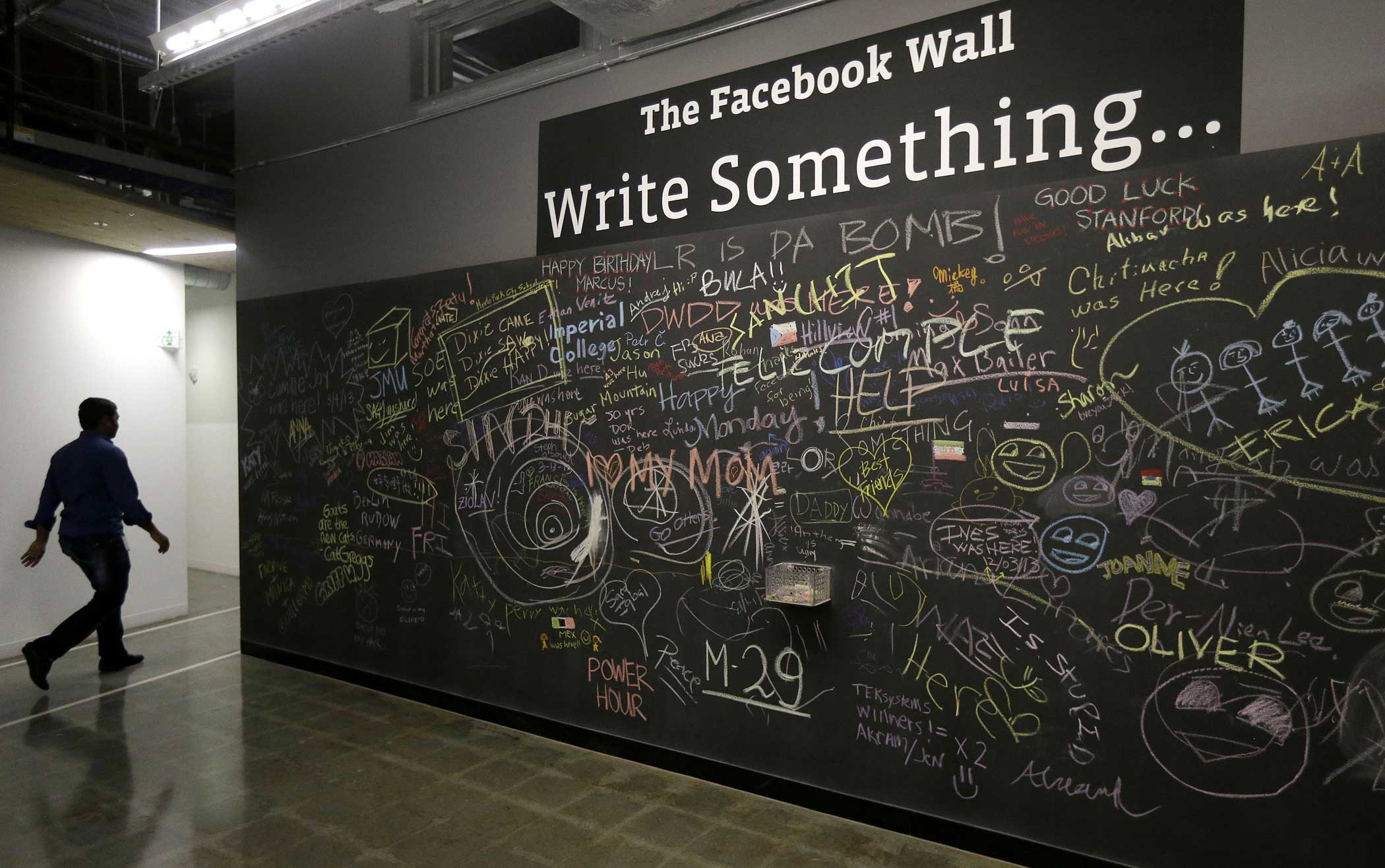


Was the open sharing of personal locations a feature or a privacy issue? In the spirit of “hacker culture,” I attempted to answer this question with code, and wrote a post on Medium about my findings. This wasn’t a new concern: In 2012, CNET had brought this issue to Facebook’s and the public’s attention. My app wasn’t exploiting any trade secrets or breaking into anything. My app did nothing other than read data that was already on your screen and display it on a map (something anyone could do with a piece of paper, a pencil, and a little bit of time). For what it’s worth, Facebook disagreed, saying I violated the site’s user agreement by utilizing data taken from the site in my app.

I expected the blog post to circulate among a close circle of friends and maybe cause some people to take a second look at their location settings. To my surprise, the blog post resonated with people, and it spread quickly. Facebook took notice. An employee from the company asked me not to speak with the press and to deactivate the extension, which I did. I was both shocked and disappointed when a Facebook HR representative called the day before I was supposed to start my summer internship, after I had already moved to California, to inform me that my offer was being rescinded.

Shortly after, Facebook issued an update that reversed the way geolocation sharing worked on Facebook Messenger. Now, a person’s geolocation displays only if she actively elects to share it. (A spokesperson for Facebook told the press that the company had been working on this fix for some time.)

This episode has been an incredible learning opportunity, shedding light on how big tech firms respond to privacy issues and how that response may hurt users of their products. This was my main reason for publicly documenting this experience in my academic paper in the Harvard journal Technology Science.

So much of our lives has shifted online so quickly that it is not always clear how much data we are making accessible to others. The problem is compounded by the fact that the companies tasked with holding and distributing our data are often incentivized to make us reveal as much as possible, while providing just enough privacy controls to ensure users don’t leave their platforms. Moving forward, will companies like Facebook be more aware of privacy concerns and more proactive in patching them? Or must we continue to rely on privacy guardians effecting change from the outside?
