A new research paper released by Google this week reaffirms what’s been more or less obvious since HAL 9000 murdered four astronauts in Stanley Kubrick’s 1968 film 2001: A Space Odyssey: computers do not make particularly dependable friends.

The Google project—an admittedly more benign exercise than Kubrick’s—was yet another attempt to fashion a “conversational model” of artificial intelligence. Colloquially, we refer to these systems as “chatbots”—programs that can somewhat convincingly engage in human discourse.

The researchers behind the experiment, Oriol Vinyals and Quoc V. Le, trained their chatbot on two sets of data: guidelines from an IT troubleshooting help desk and a database of movie subtitles. The ensuing dialogue was mostly awkward and occasionally nihilistic.

“What is the color of water?” the researchers asked.

“Water,” the computer replied simply.

“Where are you now?” they asked.

“I’m in the middle of nowhere.”

“Tell me the definition of morality,” the researchers requested.

“I don’t have ethics,” the machine advised them.

According to the transcript, this went on for a while. Philosophical banter proving futile, Vinyals and Le switched gears, with more coherent results.

“What do you think about Cleopatra?” they asked.

“Oh, she’s very regal.”

Read more dialogue here.

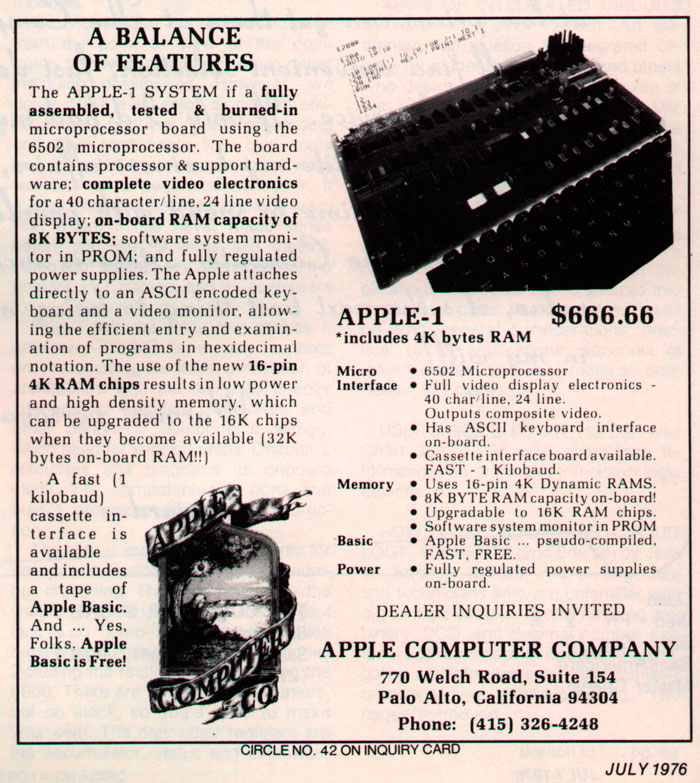

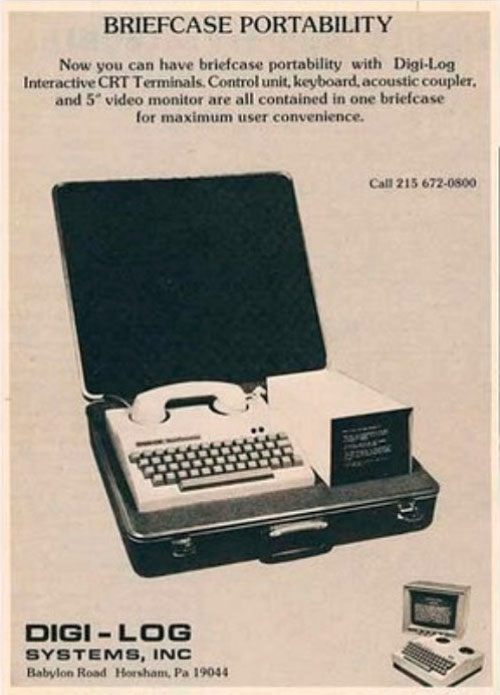

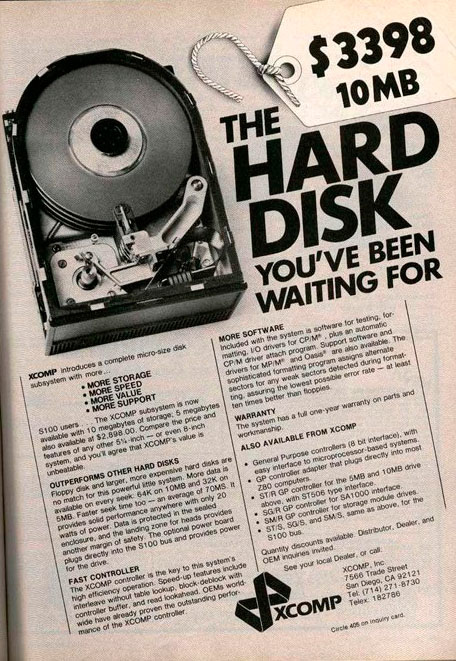

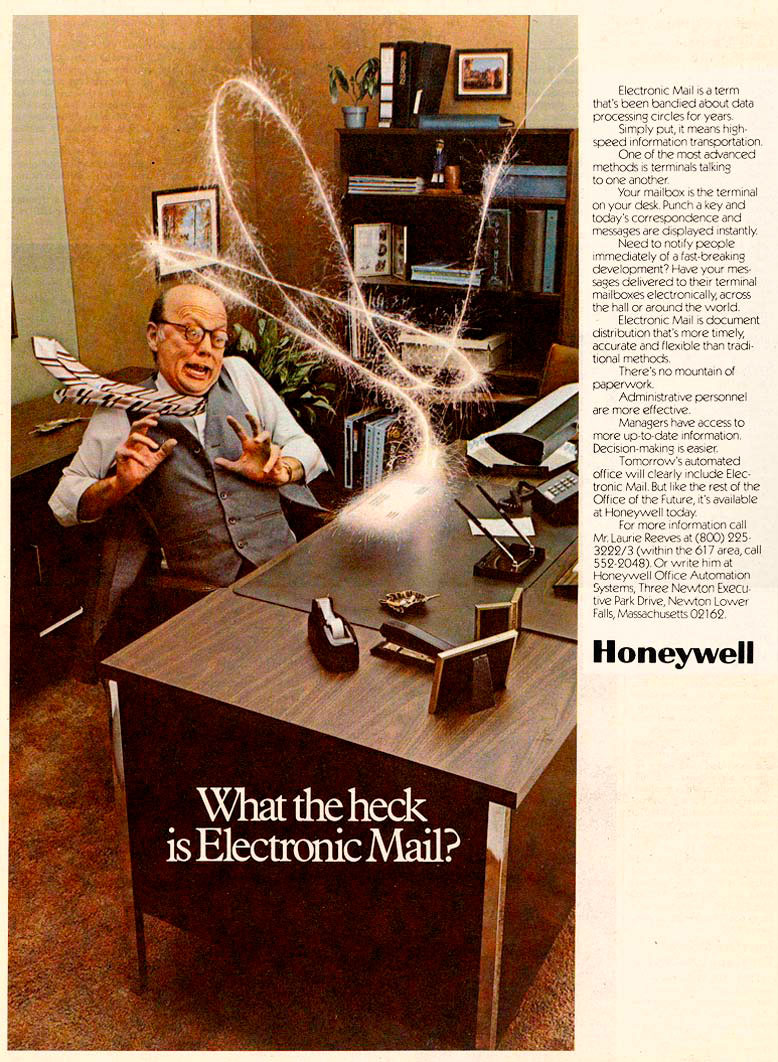

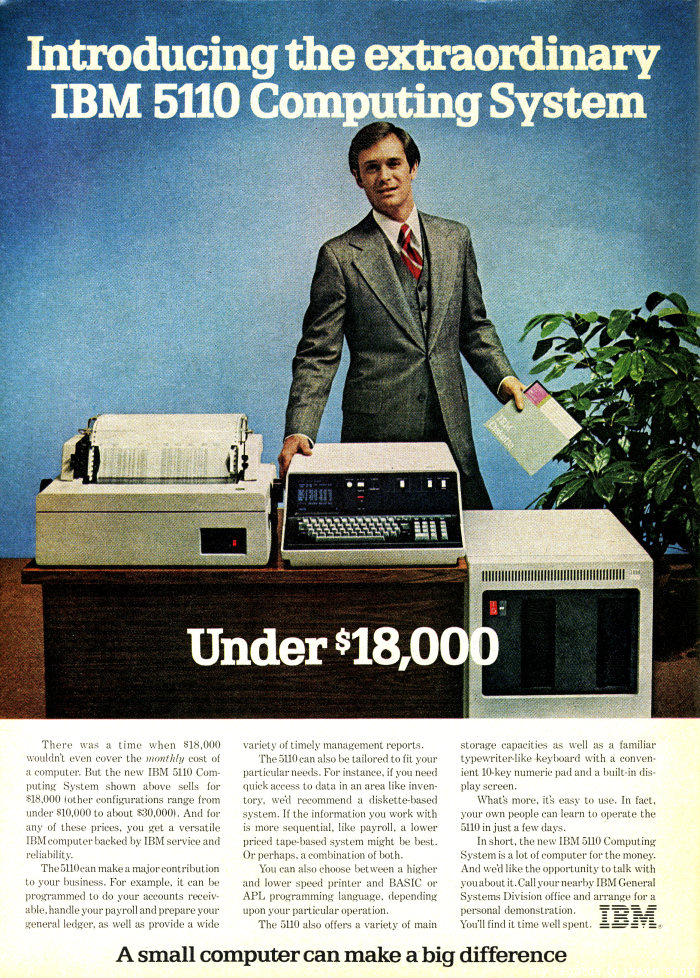

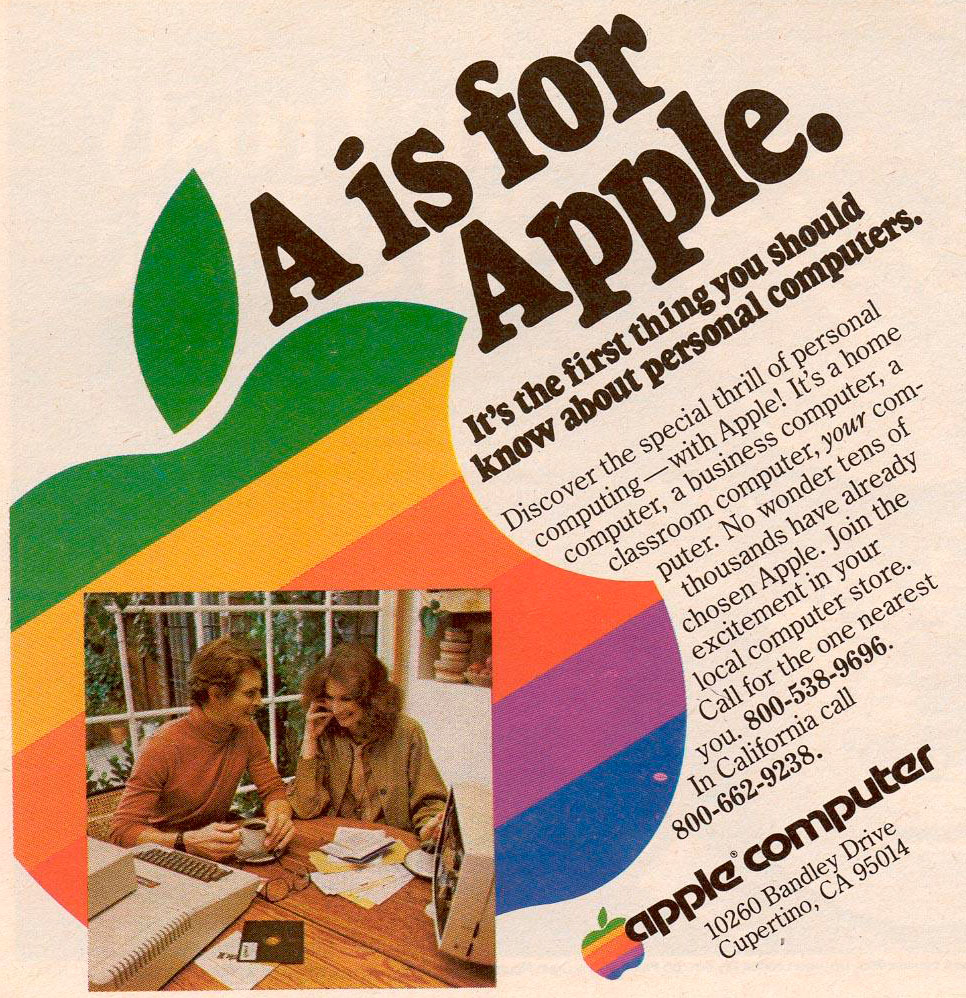

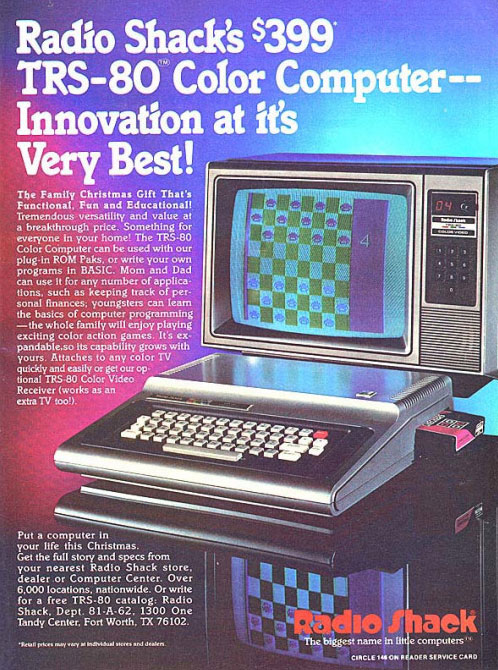

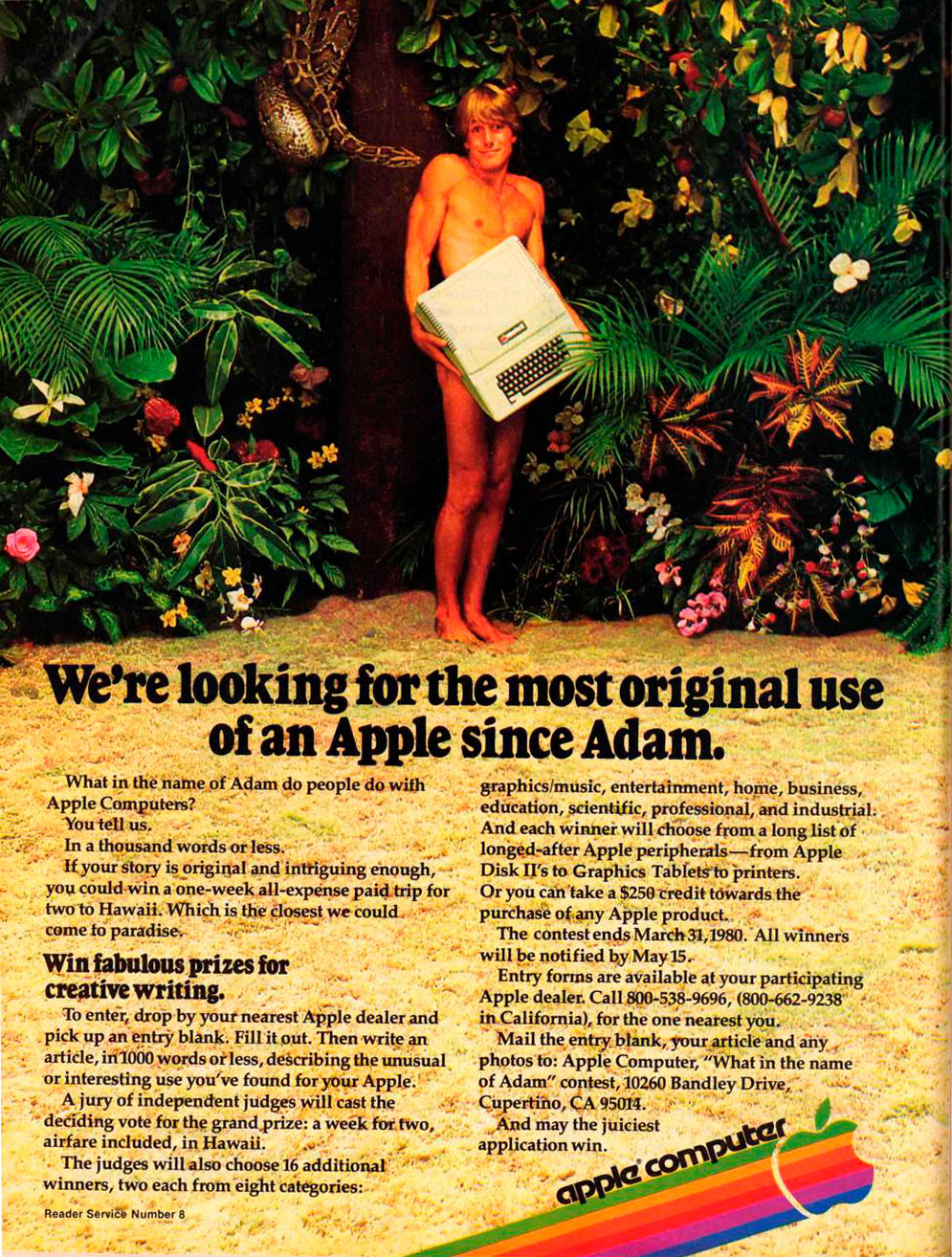

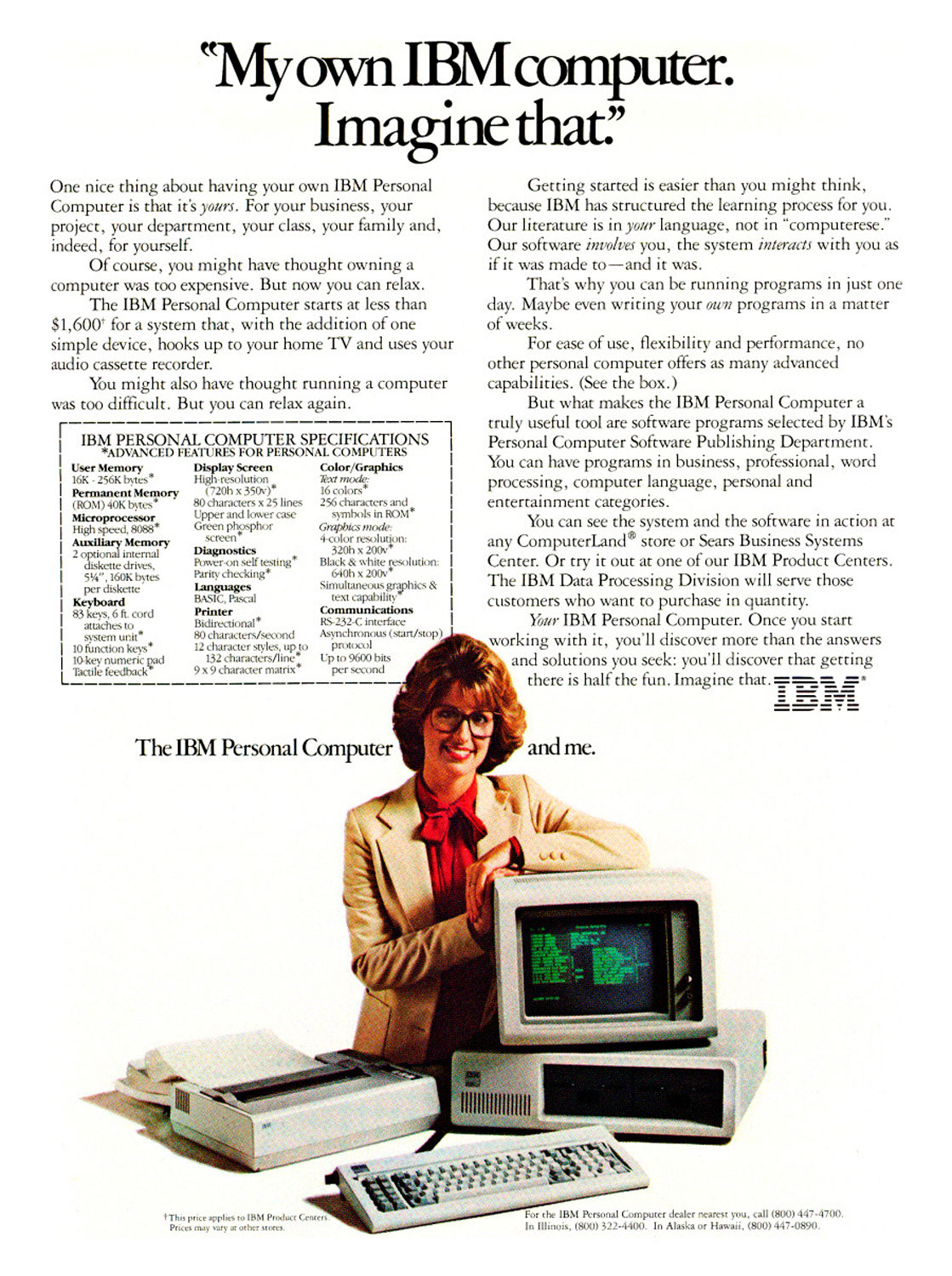

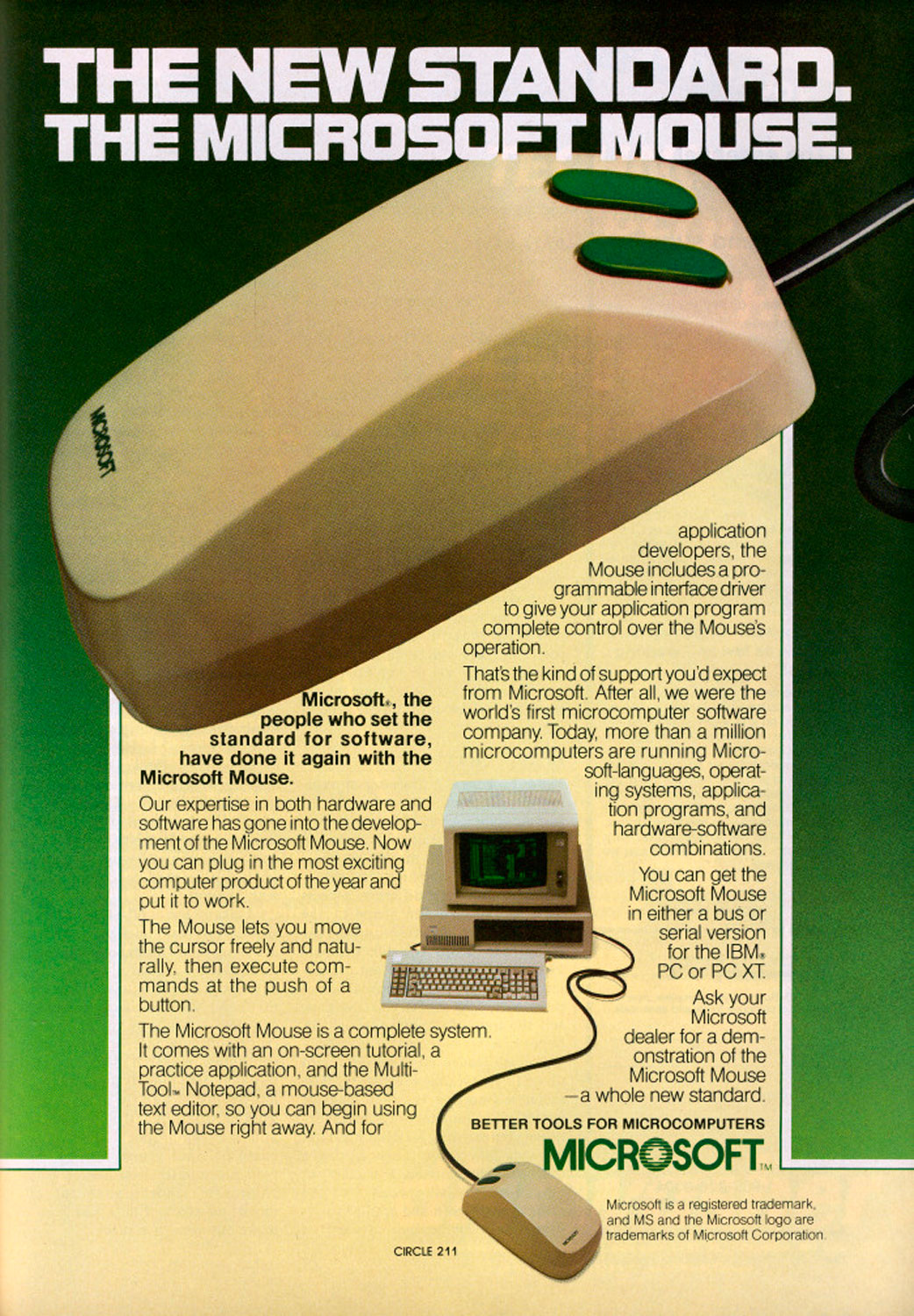

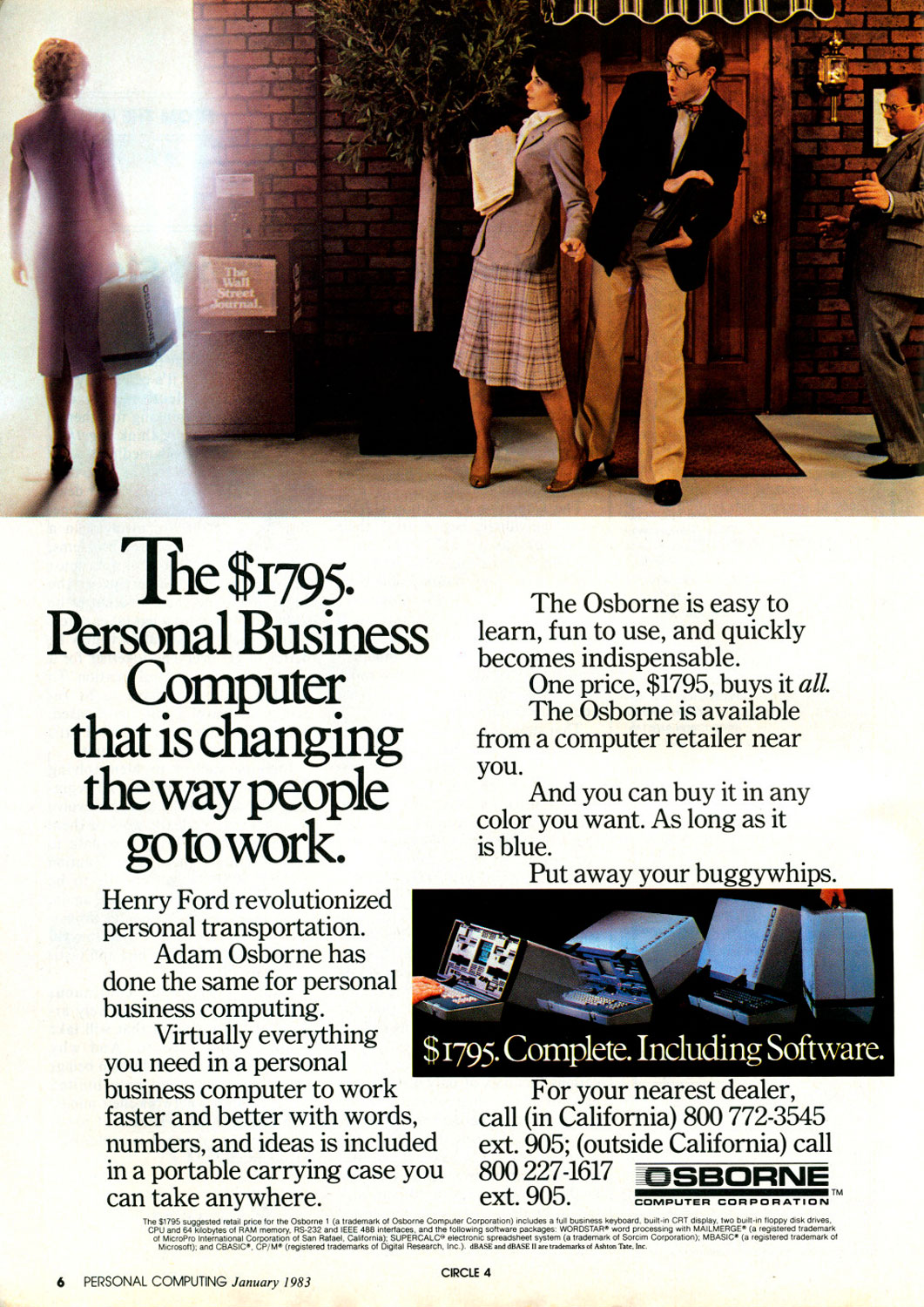

These Vintage Computer Ads Show We've Come a Long, Long Way
